AI can clone a framework in a week. That's not the problem

A working Next.js clone in one week. A C compiler built autonomously in two. The headlines have been hard to miss, and the achievements are impressive. But the coverage is missing the more important story – one that will affect every business that builds on open source software.

Anthropic published an account of how Claude Code built a C compiler autonomously for $20,000 in tokens. A couple of weeks later, Cloudflare released vinext, a from-scratch rebuild of Next.js, the most popular React framework, completed in a week for $1,100.

Both projects had a head start. The C compiler had 37 years of GCC torture tests and a working reference compiler to verify against, and vinext was built against Next.js's own comprehensive public test suite. The results are impressive all the same.

The industry reacted, as always, with loud headlines, developer anxiety, and then large parts of the community downplaying the whole thing. What got lost in the noise is a different problem – one that these stories have quietly exposed. AI is changing the open source ecosystem, and that has real implications for any business that builds on it.

Fragmentation

Before vinext, reimplementing a major framework would have been a multi-year engineering project that most companies wouldn't even consider. This year, Cloudflare did it in a week for $1,100. More importantly, it sets a precedent: any company with a reason to want its own variant of a popular framework can now afford to try, and every variant that gains traction splits the ecosystem a little further.

For businesses, this can create a real problem. Fragmented ecosystems mean thinner talent pools, less community knowledge, and a higher risk of choosing a technology that quietly loses momentum. Tech debt has always been a risk. What has changed is how quickly the conditions for it can now arise.

The maintainer crisis

The open source software that modern development depends on is maintained by small numbers of people, often volunteers, who are now drowning in AI-generated noise.

curl's bug bounty programme was closed this year because genuine vulnerability reports had dropped from 15% of all submissions to 5% – the rest was AI-generated slop[1]. GitHub opened a community discussion in February acknowledging "a critical issue affecting the open source community: the increasing volume of low-quality contributions that is creating significant operational challenges for maintainers."[2]

The people keeping these projects alive are burning out. That's a risk for anyone whose software depends on them.

The broken trust model

The review process in open source depends on an implicit assumption: the person submitting code understands what they've written. That assumption is breaking down. One Microsoft engineer who maintains several open source projects summarised it plainly[3]: the review trust model is broken, AI-generated PRs can look structurally fine but be logically wrong, and line-by-line review is still mandatory for anything shipped – but it no longer scales. Review burden is higher than it was before AI, not lower.

The security gap – where it gets concrete

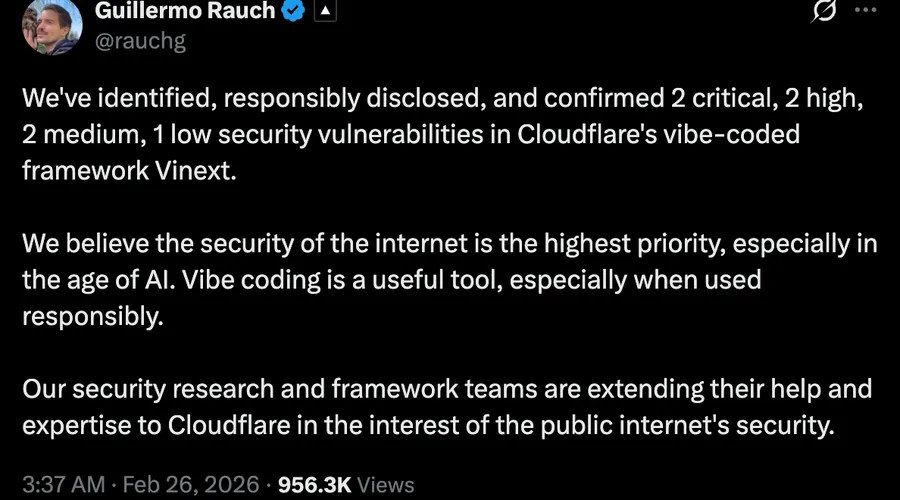

This is where the vinext story gets its second act. Within two days of the release, Vercel's CEO Guillermo Rauch disclosed seven security vulnerabilities in the new framework – two of them critical. His post called it "a vibe-coded framework." An independent security researcher using AI-based scanning tools then found 45 vulnerabilities, 24 of which were manually validated.

The reason matters. The Hacktron researcher put it well[4]: the model's objective is not "be secure" – it's "pass the tests." Vulnerabilities don't live in functional test cases. They live in the negative space between them: subtle interactions between layers, parser differentials, edge cases nobody thought to write a test for. Common flaws included server-side request forgery, broken authentication flows, and missing security headers – the kinds of subtle mistakes that don't show up immediately but create real exposure in production.
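
To make the "negative space" idea concrete, here is a minimal sketch of how a server-side request forgery hole can sit behind a fully green functional test suite. It is not taken from vinext or Next.js. The function names and the image-proxy scenario are hypothetical, and the hardened version only handles literal IP addresses and `localhost`, so it is an illustration rather than a complete defence.

```python
import ipaddress
from urllib.parse import urlsplit


def is_allowed_naive(url: str) -> bool:
    """Hypothetical check that satisfies the functional tests:
    accept any http(s) URL that has a host."""
    parts = urlsplit(url)
    return parts.scheme in ("http", "https") and bool(parts.hostname)


def is_allowed_hardened(url: str) -> bool:
    """Same check, but also reject obvious internal targets."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    if parts.hostname == "localhost":
        return False
    try:
        addr = ipaddress.ip_address(parts.hostname)
    except ValueError:
        # Not a literal IP address. A production check would also resolve
        # the name and validate the resolved addresses; omitted here.
        return True
    return not (addr.is_loopback or addr.is_private or addr.is_link_local)


# Functional tests of the kind a public suite would contain: both pass.
assert is_allowed_naive("https://example.com/cat.png")
assert not is_allowed_naive("ftp://example.com/cat.png")

# The case nobody wrote a test for: a link-local metadata-style address.
internal = "http://169.254.169.254/latest/meta-data/"
assert is_allowed_naive(internal)         # naive check lets it through
assert not is_allowed_hardened(internal)  # hardened check blocks it
```

Both versions pass the same functional tests. Only the second reflects the kind of fix that usually arrives after someone finds the bug, which is knowledge a test-driven clone never sees unless the test exists.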

This is the real implication of AI-generated clones: you can replicate documented behaviour without inheriting the security fixes that came from years of people finding and patching bugs in the original.

The protection response – and the unintended consequences

Some projects are already responding by closing off. Tldraw closed external contributions entirely[5]. Others are considering similar moves. And then there's SQLite, which has kept its primary test harness – TH3 – completely private for years[6]. The SQLite team is explicit about why: they don't want their most effective fuzzing tools to become widely accessible.

If AI can clone a framework using its public test suite as a specification, the logical defensive response is to make the test suite private. But that cuts off legitimate contributors too, and it means cloned versions are more likely to reintroduce bugs the original project spent years fixing. The protection mechanism creates its own fragility.

This is a strategy problem, not a technical one

The solution isn't "stop using AI." The point here is different: the conditions that make technology choices risky have changed, and the decisions that follow from them need to catch up.

- Tech stack decisions are now strategic business decisions. This was always true, but the rate at which ecosystems can fragment has changed. A framework with a healthy community today might look very different in two years if AI-assisted clones fragment the talent pool or if maintainer burnout hollows out the core team. The cost of getting stuck with poorly-supported technology – slower delivery, harder hiring, growing technical debt – is a business cost, not just a technical one. Businesses that understand this and make deliberate, well-informed choices will have an advantage over ones that chase whatever's newest.

- Watch the health of the projects you depend on. How many paid maintainers does this project have? Who funds it? What does the contribution graph look like? Is the community growing or fragmenting? These are now due-diligence questions, not optional extras.

- Use AI to give back, not just to take. AI makes it easier to understand complex codebases, trace bugs to their source, and write well-targeted fixes. The answer to the AI slop problem isn't avoiding AI – it's using it with understanding and intention. If your business depends on an open source project, contributing meaningfully to it (with or without AI assistance) is a form of infrastructure investment.

- The pace of change means staying informed is itself a competitive advantage. The businesses that navigate this well won't necessarily be the fastest adopters. They'll be the ones paying attention – reading the primary sources, understanding what's actually happening in the ecosystem, and making deliberate choices rather than following the hype.
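
One of the project-health questions above, what the contribution graph looks like, can be roughly approximated from commit history. The sketch below is an assumption-laden illustration, not an established metric: the function name and sample data are hypothetical, and the input is assumed to be one author name per commit, for example as produced by `git log --format=%an`.

```python
from collections import Counter


def contributor_concentration(commit_authors: list[str], top_n: int = 1) -> float:
    """Share of commits made by the top_n most active authors (0.0 to 1.0)."""
    if not commit_authors:
        raise ValueError("no commits to analyse")
    counts = Counter(commit_authors)
    top_commits = sum(n for _, n in counts.most_common(top_n))
    return top_commits / len(commit_authors)


# Hypothetical sample: ten commits, eight of them from a single person.
sample = ["ana"] * 8 + ["ben"] + ["chi"]
share = contributor_concentration(sample)
print(f"{share:.0%} of commits come from the most active contributor")
```

For this sample the function reports 80%. A share that high suggests the project leans heavily on one person, which is a prompt to ask the other questions in the list (who funds that person, and who would take over) rather than an answer in itself.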